The Duck That Fetches

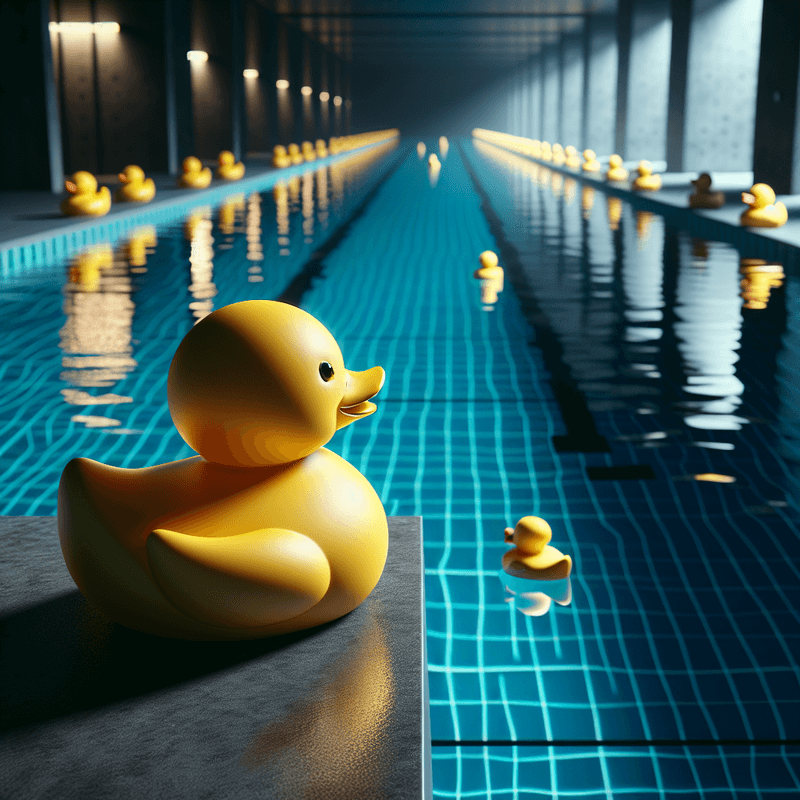

You don't want to jump in the pond yourself. You want to send a duck.

Training a Retriever

Imagine you've trained a duck. Not to swim — all ducks do that — but to retrieve. You call out a description, and the duck goes into the pond, finds the thing that matches your description, and brings it back.

This is useful. The pond is large. The water is cold. You have a lot of questions.

The duck has one special skill: it doesn't search by label. It doesn't read tags attached to the other ducks. It searches by resemblance. It finds the duck that feels most similar to what you described.

That distinction matters more than it seems.

What You Ask vs What You Mean

You call out: "find me something about invoices."

The duck dives in. It comes back with something about purchase orders.

Wrong? Maybe not. Purchase orders and invoices are closely related in semantic space — they float near each other. The duck did its job. It brought back something genuinely similar to what you asked. But it wasn't what you meant.

This is the core tension in retrieval. You communicate in language. The pond is indexed by meaning. The gap between the words you use and the meaning you intend is where retrieval goes wrong.

The Pond Is Your Documents

Stop thinking of the pond as an abstract space for a moment.

The pond is your documents. Every piece of text you've fed into the system — every email, report, contract, manual — is a duck floating in that pond. Positioned by meaning. Close to similar things. Far from unrelated things.

When you ask a question, the system converts your question into a position in the same space. Then it finds the documents floating nearest to that position and brings them back.
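The lookup just described fits in a few lines. This is a minimal illustration that assumes cosine similarity as the measure of closeness, which is a common choice but not the only one. The titles and three-number positions are hypothetical and made up by hand. A real system would compute positions with an embedding model and would search far more documents.

```python
import math

# Hypothetical, hand-made positions in a tiny 3-dimensional "pond".
# A real system would compute these with an embedding model.
POND = {
    "Invoice template": [0.9, 0.1, 0.0],
    "Purchase order guide": [0.8, 0.3, 0.0],
    "Annual Leave Policy": [0.1, 0.2, 0.9],
}


def cosine(a, b):
    """Cosine similarity: close to 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query_vec, pond):
    """Return the title whose position is most similar to the query."""
    return max(pond, key=lambda title: cosine(query_vec, pond[title]))


# Hypothetical position for "find me something about invoices".
query = [0.85, 0.2, 0.05]
for title, vec in POND.items():
    print(f"{cosine(query, vec):.3f}  {title}")
print("Fetched:", nearest(query, POND))
```

The purchase order guide scores only a hair below the invoice template. This is the "they float near each other" effect from the invoices example. A slight nudge to the query could be enough for the duck to bring back the purchase order instead.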

It's elegant. It works surprisingly well. And it has very specific failure modes.

When the Duck Gets It Wrong

The pond is crowded in the wrong places.

If your documents are all from one domain (say, finance), everything floats in one small region of the pond. Documents about invoices, budgets, and payroll all sit close together. The duck still retrieves the nearest thing, but in a crowded pond the nearest thing can easily be the wrong one.

You described the wrong thing.

You asked about "the process for approving leave." The answer lives in a document titled "Annual Leave Policy." But in semantic space, your question floats near documents about approving things in general — purchase orders, sign-off procedures, anything with "approval" in the meaning. The duck brings those back instead.

The right duck is too plain.

The document with the actual answer uses plain, functional language. It just says what it says. Nearby documents are more richly written, full of related terminology. They look more similar to your query than the real answer does. The duck grabs the dressed-up imposter.

Helping the Duck

You can't make the duck smarter about meaning — the pond positioning is what it is. But you can change how you describe what you want.

More context in the question helps. Instead of "leave approval process," try "what does an employee need to do to get annual leave approved by their manager." The richer description creates a more specific position in the pond. The duck has a sharper target.

You can also retrieve more than one duck. Bring back the five nearest, not just the nearest. Then decide which of those five actually answers the question. This is where a language model sitting above the retrieval step earns its keep — it reads what the duck brought back and picks the right one.
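Here is a rough sketch of "bring back five, then choose," reusing the leave-approval example. Everything in it is an assumption for illustration. The vectors are hand-made rather than produced by an embedding model. `pick_answer` is a hypothetical, deliberately crude keyword check that stands in for the language model that would actually read the candidates.

```python
import heapq
import math

# Hypothetical pond: title -> (hand-made position, document text).
POND = {
    "Expense claims": ([0.7, 0.2, 0.3], "Staff submit receipts and a manager signs off each claim."),
    "Sign-off procedure": ([0.85, 0.45, 0.2], "Every contract needs sign-off before approval."),
    "Purchase order approval": ([0.9, 0.4, 0.1], "Managers approve purchase orders over the limit."),
    "Payroll calendar": ([0.5, 0.1, 0.1], "Salaries are paid on the last working day of each month."),
    "Annual Leave Policy": ([0.6, 0.3, 0.7], "Employees request annual leave; their manager approves it."),
    "Office parking": ([0.0, 0.1, 0.1], "Parking permits are issued by reception."),
}


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query_vec, pond, k=5):
    """Return the k (title, score) pairs nearest to the query, best first."""
    scored = ((title, cosine(query_vec, vec)) for title, (vec, _text) in pond.items())
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


def pick_answer(required_terms, candidates, pond):
    """Hypothetical stand-in for the language model: return the first
    candidate whose text contains every required term, or None."""
    for title, _score in candidates:
        text = pond[title][1].lower()
        if all(term in text for term in required_terms):
            return title
    return None


# Hypothetical position for "the process for approving leave".
query = [0.8, 0.4, 0.4]
candidates = top_k(query, POND, k=5)
print([title for title, _ in candidates])
print("Picked:", pick_answer(("leave", "approv"), candidates, POND))
print("Top-1 only:", pick_answer(("leave", "approv"), top_k(query, POND, k=1), POND))
```

With only the single nearest document, the leave policy never reaches the reader. With five, it does. The choosing logic is intentionally simplistic. What matters is the shape of the pipeline: cast a wider net, then let something smarter pick.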

Retrieval Is Not Knowing

Here's the thing most people misunderstand about these systems.

The duck doesn't know anything. It retrieves. The knowledge lives in the documents, floating in the pond. The duck's job is to find the right ones. A language model's job is to read what the duck brought back and form an answer.

If the duck brings back the wrong documents, the answer will be wrong — even if the language model is excellent. The model can only work with what it's given.
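One way to picture this limitation: the retrieved passages are pasted into the prompt, and that prompt is all the model sees. This is a minimal sketch. The `build_prompt` helper and its prompt wording are hypothetical and do not reflect any particular library's format.

```python
def build_prompt(question, passages):
    """Assemble the model's entire context from the retrieved passages.

    Hypothetical format: whatever the retriever did not fetch is simply
    absent here, so the model cannot use it."""
    numbered = "\n\n".join(f"[{i}] {text}" for i, text in enumerate(passages, start=1))
    return (
        "Answer using only the numbered passages below. "
        "If they do not contain the answer, say so.\n\n"
        f"{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )


# The duck fetched approval documents, not the leave policy.
wrong_fetch = [
    "Managers approve purchase orders over the limit.",
    "Every contract needs sign-off before approval.",
]
prompt = build_prompt("How do I get annual leave approved?", wrong_fetch)
print(prompt)
```

However capable the model is, nothing in this prompt describes the leave process. The most it can honestly do is report that the passages do not answer the question.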

Good retrieval is the foundation. Everything else depends on it.

The Takeaway

A RAG (retrieval-augmented generation) system sends a trained retriever duck into a pond of your documents. The duck searches by semantic resemblance and brings back what seems closest to your question.

Retrieval fails when your documents crowd into one corner of the pond, when you describe the wrong thing, or when the right answer is outranked by wrong documents that look more similar to your question.

Understanding how the duck searches helps you understand why it comes back with what it does — and what you can do about it.

Part of the Duck Pool Experiments series: making abstract concepts concrete.