Five Hundred Dimensions

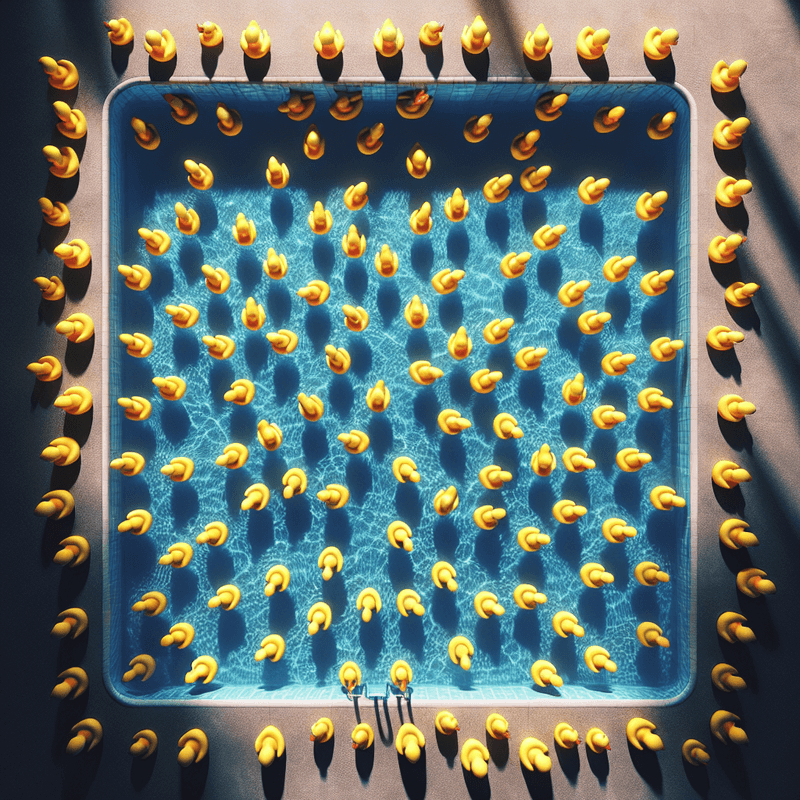

The pond in your head has two dimensions. Length and width. The ducks float on a flat surface.

The real pond has five hundred.

What You Can Picture

When we talk about semantic space — the pond where meaning lives — it's natural to picture something flat. A map. An X and a Y. Similar things close together, different things far apart.

That picture is useful. It's not wrong. But it's missing almost everything.

Real embedding models, the systems that convert text into positions in semantic space, don't use two dimensions. They use hundreds. Sometimes over a thousand. Between them, those dimensions capture many different aspects of meaning.

What Extra Dimensions Actually Do

Think about a single duck. In a two-dimensional pond, you can describe its position with two numbers. How far from the north edge. How far from the west edge.

Now imagine that duck also has a height above the water. That's a third dimension. You need three numbers to describe it fully.

Now imagine it also has a temperature. A colour intensity. A density. A direction of motion. A history of where it's been.

Each of those properties adds another dimension. Another axis along which two ducks can be similar or different.
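A duck's position is just a list of numbers, one per axis, and the same distance formula works whether that list has two entries or five hundred. Here is a minimal Python sketch using plain Euclidean distance. The coordinates are made up for illustration.

```python
import math
from typing import Sequence


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Straight-line distance between two points with any number of axes."""
    if len(a) != len(b):
        raise ValueError("points must have the same number of dimensions")
    # Each axis contributes its own squared difference; adding an axis
    # adds one more term to the sum.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Two ducks on a flat pond: (distance from north edge, distance from west edge).
print(euclidean_distance((3.0, 4.0), (0.0, 0.0)))  # 5.0

# The same two ducks with a third axis (height above the water) added.
print(euclidean_distance((3.0, 4.0, 12.0), (0.0, 0.0, 0.0)))  # 13.0

# Five hundred axes: the function doesn't change at all.
print(round(euclidean_distance([1.0] * 500, [0.0] * 500), 2))  # 22.36
```

Nothing about the calculation is specific to two dimensions. Each new property simply adds one more term to the sum.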

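In semantic space, words and phrases have hundreds of these properties. Not physical ones, but meaning properties. How formal the language is. Whether it relates to time or to objects. Whether it implies causation. Whether it carries emotional weight. Whether it typically appears near technical vocabulary. The model learns these from text rather than being handed a list, so a property like formality is usually spread across several axes rather than sitting neatly on one.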

A single dimension can't capture all of that. Neither can two. You need many.

Why This Makes Similarity Complicated

In a flat pond, "close" means one thing. Two ducks are close if they're near each other on the surface.

In a high-dimensional pond, "close" is more nuanced. Two ducks might be very close on four hundred dimensions and very far apart on the other hundred. What does that mean?

It means they're similar in most respects and different in a few specific ones.

"Invoice" and "receipt" are close on most dimensions: both relate to financial transactions, formal documents, and amounts of money. But they're far apart on the dimensions that capture the direction of the transaction (an invoice asks for payment; a receipt confirms it) and who holds the document. Close in general. Distinct in specifics.
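The "close on 400, far on 100" idea can be made concrete with synthetic vectors. This is a sketch under loud assumptions: the two vectors below are random stand-ins, not real embeddings of "invoice" and "receipt", and the split into 400 "shared" and 100 "differing" axes is hypothetical, because real models don't label their axes.

```python
import math
import random

DIMS = 500
SHARED = 400  # hypothetical split: the first 400 axes are where the items agree

rng = random.Random(0)

# Synthetic stand-ins, NOT real embeddings of "invoice" and "receipt".
invoice = [rng.gauss(0.0, 1.0) for _ in range(DIMS)]
receipt = (
    # Shared axes: same value plus a little noise, so "very close".
    [x + rng.gauss(0.0, 0.1) for x in invoice[:SHARED]]
    # Remaining axes: sign flipped, so "far apart".
    + [-x for x in invoice[SHARED:]]
)


def block_distance(a, b, start, stop):
    """Euclidean distance measured only over axes start..stop-1."""
    return math.sqrt(sum((a[i] - b[i]) ** 2 for i in range(start, stop)))


def cosine_similarity(a, b):
    """Cosine of the angle between a and b, using every axis at once."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


shared_gap = block_distance(invoice, receipt, 0, SHARED)
differing_gap = block_distance(invoice, receipt, SHARED, DIMS)
overall = cosine_similarity(invoice, receipt)

print(f"distance on the {SHARED} shared axes:    {shared_gap:.2f}")
print(f"distance on the {DIMS - SHARED} differing axes: {differing_gap:.2f}")
print(f"overall cosine similarity: {overall:.2f}")
```

The per-block distances show the structure: small on one group of axes, large on the other. The single cosine score is closer to what a search system actually ranks by. It is clearly positive, so the two are similar, but well short of 1, so they are not the same.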

A two-dimensional pond has too few axes to keep those distinctions apart, so it squashes them together. A high-dimensional pond preserves the structure.

What You Lose When You Flatten

Vector database visualisers and embedding explorers all show you a flat picture. Usually two dimensions, sometimes three. It looks tidy. You can see clusters. You can see which things are near which.

But that flat picture is a shadow of the real structure. Like looking at a sculpture from directly above and trying to understand its height.

The duck pool visualisations you've seen are useful but incomplete. They show you one projection of something with hundreds of facets. The actual structure — the thing the model uses when it retrieves — is far richer than what fits on a screen.
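One common way to produce that flat picture is principal component analysis (PCA). t-SNE and UMAP are other popular choices, and individual tools differ. The sketch below uses NumPy's SVD to project random 500-dimensional points down to two dimensions. It then measures how much of the original variance survives and checks whether a point's nearest neighbour stays the same. The data is random, not real embeddings, and this isn't any particular tool's method.

```python
import numpy as np


def nearest(data: np.ndarray, i: int) -> int:
    """Index of the point closest to point i (excluding i itself)."""
    d = np.linalg.norm(data - data[i], axis=1)
    d[i] = np.inf
    return int(np.argmin(d))


rng = np.random.default_rng(42)

# 200 synthetic points in 500 dimensions (random, not real embeddings).
points = rng.normal(size=(200, 500))

# PCA via SVD: centre the data, then keep the top two principal directions.
centred = points - points.mean(axis=0)
_, singular_values, vt = np.linalg.svd(centred, full_matrices=False)
projected = centred @ vt[:2].T  # shape (200, 2): the "flat picture"

# Fraction of the total variance the 2-D picture keeps.
variance = singular_values ** 2
kept = variance[:2].sum() / variance.sum()
print(f"variance kept by the 2-D view: {kept:.1%}")

# Does the nearest neighbour of point 0 survive the flattening?
print("nearest in 500-D:", nearest(points, 0))
print("nearest in 2-D:  ", nearest(projected, 0))
```

On data like this, the two-dimensional view keeps only a few percent of the variance. Neighbours in the plot are often not neighbours in the full space.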

Why This Matters for Retrieval

When a retrieval system finds documents "similar" to your query, it's measuring distance in that high-dimensional space. Not on a flat map. Across all five hundred axes simultaneously.
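A minimal sketch of that measurement is a brute-force search. It scores every stored vector against the query and keeps the best matches. This version uses cosine similarity, which is a common choice; some systems use dot product or Euclidean distance instead. Production systems typically rely on approximate nearest-neighbour indexes rather than scanning everything. The document IDs and the tiny four-dimensional vectors are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors, computed over every axis."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], index: Dict[str, Sequence[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Brute-force search: score every document against the query on all axes at once."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy 4-dimensional index (hypothetical); real systems store hundreds of
# dimensions per document.
index = {
    "doc-a": [0.9, 0.1, 0.0, 0.2],
    "doc-b": [0.1, 0.8, 0.3, 0.0],
    "doc-c": [0.8, 0.2, 0.1, 0.3],
}
query = [1.0, 0.0, 0.0, 0.25]

for doc_id, score in top_k(query, index):
    print(doc_id, round(score, 3))
```

Here "doc-a" and "doc-c" come back first because they point in nearly the same direction as the query across all four axes. No single axis decides the ranking.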

This is why it sometimes finds things that seem surprisingly relevant even when the words don't match. The documents are close in the dimensions that capture meaning, even if they're written very differently.

And it's why it sometimes misses things that seem obvious. The document you want might use different vocabulary — it's far away on the word-level dimensions — even though it's close on the concept-level dimensions. The overall distance is a compromise across all axes.
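The compromise can be written out. Treat the query $\mathbf{q}$ and a document $\mathbf{d}$ as $n$-dimensional vectors. Purely for illustration, pretend the axes split into two disjoint sets: a "concept-level" set $C$ and a "word-level" set $W$. Real models don't label their axes this way.

```latex
\lVert \mathbf{q} - \mathbf{d} \rVert^2
  = \sum_{i=1}^{n} (q_i - d_i)^2
  = \underbrace{\sum_{i \in C} (q_i - d_i)^2}_{\text{concept-level axes}}
  + \underbrace{\sum_{i \in W} (q_i - d_i)^2}_{\text{word-level axes}}
```

The ranking only sees the total, so a small concept-level term cannot rescue a document whose word-level term is large. For vectors normalised to unit length, $\lVert \mathbf{q} - \mathbf{d} \rVert^2 = 2 - 2\cos(\mathbf{q}, \mathbf{d})$. Ranking by this distance therefore gives the same order as ranking by cosine similarity.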

The Upside

Five hundred dimensions sounds unwieldy. In practice, it's what makes semantic search work at all.

Two dimensions could tell you that "dog" and "cat" are both animals. Five hundred dimensions can also tell you that "dog" and "loyal" share something, that "cat" and "independent" share something, and that both are different from "carburettor" in ways that go beyond simple category membership.

The richness of the space is what allows it to capture the richness of meaning.

The Takeaway

The duck pool has more structure than you can visualise. When you see a two-dimensional plot of embeddings, you're seeing a shadow of a space with hundreds of axes.

Each axis captures something about meaning. Similarity is measured across all of them at once.

This is why semantic search finds things you didn't expect — and misses things you thought were obvious. It's navigating a space you can't see, following distances you can't easily intuit.

The pond is deeper, wider, and stranger than it looks.

Part of the Duck Pool Experiments series: making abstract concepts concrete.